The Paid AI Fight Is Not About Intelligence. It Is About Rationing.

How to choose between ChatGPT, Gemini, and Claude when the real issue is not model quality, but how fast the limits start working against you.

Borja C

3/10/2026 · 5 min read

Most people are buying paid AI the wrong way.

They ask which model is smartest. They compare rankings, screenshots, and benchmark wins. Then they pay £20 a month and assume the problem is solved.

It is not.

The real question is simpler and more useful: which subscription will not let you down when you need it the most?

That is the difference between a nice demo and a tool you trust for daily work.

The models are converging: ChatGPT, Gemini, and Claude take turns leading the benchmarks. Their usage limits are not converging. That is where the market now divides. Not on intelligence alone, but on what happens when you use the product seriously: long threads, large files, repeated follow-ups, deep research, coding sessions, image generation, voice, and search.

My two cents.

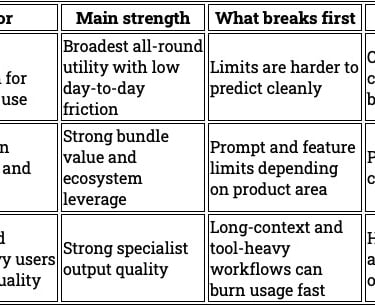

For most people:

ChatGPT is the safest all-round choice, especially if you lean on voice transcription.

Gemini gives the best value if you already live in Google’s ecosystem.

Claude is the strongest specialist, but the easiest to overconsume.

That is the thesis. The rest is just mechanics.

Why this matters

A paid AI subscription is no longer just software. It behaves more like access to a shared resource under constraints.

Two products can cost the same and feel completely different in practice.

One lets you work without thinking much about usage. Another makes you manage prompts, restart threads, and watch quota bars. A third gives you useful extras outside the model itself, which changes the real value of the subscription.

If you ignore that, you make the wrong choice.

You are not buying abstract intelligence. You are buying usable output before friction sets in.

That is what matters.

The real differentiator: rate-limit shape

Most discussion around paid AI still focuses on which model is “best.” That is too shallow.

The better lens is rate-limit shape.

How does the product fail when you push it?

What gets expensive first?

What kind of user gets punished fastest?

Those questions tell you more than another leaderboard.

ChatGPT: the best all-round default

ChatGPT remains the safest default for one reason: for many users, it creates the least day-to-day friction.

That does not mean the limits are perfectly clear. They are not. It does mean the product is usually easier to live with if you do a mix of tasks: writing, summarising, research, brainstorming, coding, voice notes, and general problem-solving.

That matters more than people admit.

Most users do not want to think about quota mechanics. They want to open the app, do the work, and move on.

ChatGPT is strongest there. It is the least mentally taxing paid option for a broad user.

That is why it remains the best default if you want one subscription and do not want to optimise your behaviour around the product.

Gemini: the strongest value play

Gemini is easy to underrate if you only compare models.

That misses the point.

Google is not just selling a model. It is selling a bundle. And for many users, that bundle changes the economics.

If you already use Gmail, Drive, Docs, NotebookLM, and the wider Google stack, Gemini starts doing two jobs at once. It gives you frontier AI access, but it also strengthens tools you already use every day: extra Drive storage, Fitbit and Google Home premium features, and integration with Docs, Sheets, and Slides…

That is a different kind of value.

This matters because most people do not use AI in isolation. They use it inside an existing workflow. If your workflow already runs through Google, Gemini can become the most efficient paid choice even if you do not think it is the absolute best model on every task.

That is the right way to look at it.

Not: “Is Gemini the smartest?”

Ask instead: “Does Gemini give me the best useful package for how I actually work?”

For many people, especially Google-heavy users, the answer is yes.

Claude: excellent, but easiest to misuse

Claude is different.

It often feels excellent on the tasks that matter most to serious users: writing, coding, structure, tone, clarity. It can feel sharp, controlled, and unusually good at turning messy inputs into clean outputs.

That is the attraction.

The problem is not quality. The problem is economics under real usage.

Claude is the easiest paid AI plan to misuse.

Why? Because it punishes sprawl faster than the others.

Long threads, heavy context, large attachments, repeated follow-ups, tasks, connectors, and agentic workflows can all push you into the limits faster than many users expect. The more you treat Claude like a genuine working partner, the more careful you often need to be.

That changes behaviour.

You stop asking one more follow-up. You split work across chats. You compress context manually. You think about whether this task is “worth” the usage. That is a real cost even before the hard limit appears.

In other words, Claude does not just cost money. It can also cost attention.

That is why it often feels brilliant at first and restrictive later.

What breaks first in each product.

This is the practical view.

Who holds up under your actual working style.

Why Claude feels harsher

Claude deserves a closer look because this is where many users get caught.

On paper, a paid AI plan looks simple. In practice, Claude can become more restrictive as your session becomes more useful.

That is the trap.

A short, clean task in a fresh chat can feel cheap. A long working thread with context, revisions, MCP connectors, files, search, or tasks can feel very different. The product does not just answer your latest message; it carries the weight of the whole conversation. And that convenience is exactly what makes it so easy to keep piling on.
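To make "the weight of the conversation" concrete, here is a rough back-of-the-envelope sketch. It rests on hypothetical assumptions, not on any vendor's published limits: every turn adds a fixed number of tokens, every reply reprocesses the whole thread so far, and usage is metered by total tokens processed. The function names and the 2,000-tokens-per-turn figure are illustrative only.

```python
# Rough sketch: cumulative context processed in one long thread vs. fresh chats.
# Hypothetical assumptions, for illustration only:
#   - every turn (prompt + reply) adds a fixed number of tokens
#   - every reply reprocesses the whole thread so far
#   - usage is metered by total tokens processed


def tokens_processed_single_thread(turns: int, tokens_per_turn: int) -> int:
    """Total tokens processed when all turns stay in one thread."""
    # Turn k reprocesses k turns' worth of history (including itself),
    # so the total grows roughly with the square of the thread length.
    return sum(k * tokens_per_turn for k in range(1, turns + 1))


def tokens_processed_fresh_chats(turns: int, tokens_per_turn: int, turns_per_chat: int) -> int:
    """Total tokens processed when the same work is split into fresh chats."""
    full_chats, remainder = divmod(turns, turns_per_chat)
    total = full_chats * tokens_processed_single_thread(turns_per_chat, tokens_per_turn)
    # Any leftover turns form one shorter final chat.
    total += tokens_processed_single_thread(remainder, tokens_per_turn)
    return total


if __name__ == "__main__":
    per_turn = 2_000  # hypothetical tokens per prompt + reply
    for turns in (5, 20, 40):
        one_thread = tokens_processed_single_thread(turns, per_turn)
        split = tokens_processed_fresh_chats(turns, per_turn, turns_per_chat=5)
        print(f"{turns} turns: one thread {one_thread:,} tokens, fresh chats of 5 {split:,} tokens")
```

Under these assumptions, 40 turns in a single thread process 1,640,000 tokens, while the same 40 turns split into chats of five process 240,000. The catch is that each fresh chat starts without the earlier context, which is why splitting work usually means compressing and re-supplying context by hand.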

The consequence is predictable.

A casual user might think Claude is generous enough. A power user can hit friction much sooner.

That does not make Claude bad. It makes it less forgiving.

This is the key distinction: Claude rewards discipline, but it punishes drift.

If you are structured, reset context often, and use it with intent, it can be excellent value. If you work in long, messy, evolving sessions, it can become the easiest plan to burn through.

That is not a small detail. It changes whether the product feels liberating or restrictive.

The trade-offs

This is not a simple winner-takes-all market.

Each option has a clear trade-off.

ChatGPT is the best default, but not because it is always best at every task. It wins because it is the easiest to rely on across many tasks without constant quota management.

Gemini gives the strongest bundle economics, but that advantage depends on whether you actually use Google’s ecosystem. If you do not, part of the value disappears.

Claude can produce some of the best work, especially in writing and coding, but it asks more from the user. More discipline. More awareness of context. More willingness to manage the tool instead of just using it.

So the right choice depends less on ideology and more on operating style.

My recommendation

If you want one subscription for a broad mix of work, especially if you rely heavily on voice, choose ChatGPT.

If your digital life already runs through Google and you want a more compliant AI, choose Gemini.

If writing or coding quality is your top priority and you are disciplined enough to manage context tightly, choose Claude.

That is the cleanest way to decide.

Conclusion

The paid AI market has changed.

The old question was which model is smartest. That question still matters, but less than people think.

The better question now is which product stays useful when real work begins.

That means:

how fast the limits show up,

what kind of behaviour gets punished,

what extra value comes with the subscription,

and whether the product reduces friction or adds it.

That is how you should choose.

Not by hype.

Not by one benchmark chart.

By operating reality.

Clear takeaway

Do not buy a paid AI plan based only on model quality.

Buy based on this:

ChatGPT if you want the safest all-round default

Gemini if you want the strongest value inside Google’s ecosystem

Claude if you want specialist quality and can manage usage with discipline

The model matters.

But the limit shape decides whether the subscription actually works for you.

Contact

Reach out for AI solutions today

Corporate email: sales@computelabtech.com

© 2026. All rights reserved.