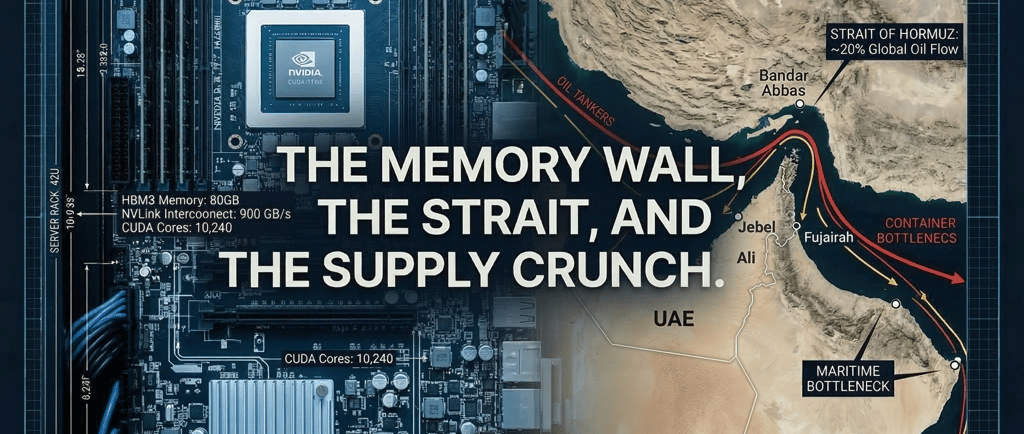

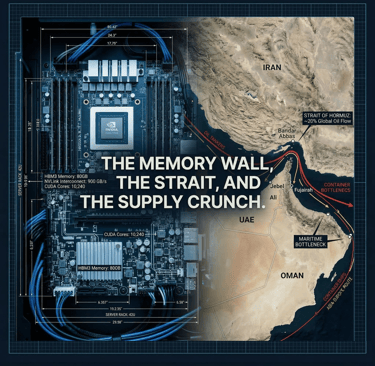

The Memory Wall, the Strait, and the Supply Crunch

How AI Hardware, Geopolitics, and Export Controls Are Reshaping the GPU Market

Borja C

3/15/2026 · 9 min read

The past two weeks have delivered a convergence of events that anyone sourcing, selling, or deploying AI hardware needs to understand.

A new generation of inference-optimized models is rewriting the rules on what silicon is needed and why.

The Strait of Hormuz is effectively closed, severing a critical logistics artery for the Middle East and Europe.

And Washington is tightening the screws on GPU exports in ways that will reshape data center buildouts from Tokyo to Rabat.

These developments change the rules for who can get GPUs, what kind they need, and how they reach their destination. If you are not in the USA, the landscape just shifted dramatically.

NVIDIA Nemotron 3 Super: A New Blueprint for Inference Silicon

TLDR;

NVIDIA released a new model that runs up to 4x faster on Blackwell GPUs and supports a 1M-token context window with a smaller memory footprint. The result is higher speed and lower cost per token.

On March 11, 2026, NVIDIA released Nemotron 3 Super. The model is a 120 billion parameter hybrid architecture with only 12 billion parameters active during any given forward pass, achieved through a sparse Mixture-of-Experts (MoE) routing design. This is not a marketing distinction. It means the compute cost per token is dramatically lower than a dense model of equivalent quality, while the full 120 billion parameters still need to reside in memory.
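The gap between compute cost and memory cost can be made concrete with a back-of-the-envelope calculation. The sketch below uses the parameter counts quoted above and assumes the common approximation of about 2 FLOPs per parameter per token for a forward pass. That rule of thumb and the dense 120B comparison model are illustrative assumptions, not NVIDIA-published figures.

```python
# Rough per-token compute comparison for a sparse MoE model.
# Parameter counts come from the article; the 2-FLOPs-per-parameter rule is a
# common approximation for a forward pass, used here as an assumption.

TOTAL_PARAMS = 120e9   # every expert must be resident in memory
ACTIVE_PARAMS = 12e9   # parameters actually exercised for one token


def forward_flops_per_token(params: float) -> float:
    """Approximate forward-pass FLOPs per token: one multiply and one add per parameter."""
    return 2.0 * params


sparse_flops = forward_flops_per_token(ACTIVE_PARAMS)
dense_flops = forward_flops_per_token(TOTAL_PARAMS)  # hypothetical dense model of the same size

print(f"Sparse MoE: {sparse_flops / 1e9:.0f} GFLOPs per token")
print(f"Dense 120B: {dense_flops / 1e9:.0f} GFLOPs per token")
print(f"Compute ratio (dense / sparse): {dense_flops / sparse_flops:.0f}x")
```

The memory side of the ledger does not shrink with the active parameter count: all 120 billion parameters still have to be stored, which is why precision and memory bandwidth matter so much for this class of model.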

What makes Nemotron 3 Super architecturally significant is that it is one of the first major models from a large company to incorporate Mamba-2 layers as a core component of its sequence processing. Mamba is a state-space model that processes sequences in linear time rather than the quadratic scaling of traditional transformer attention. In practical terms, the Mamba layers maintain a compressed hidden state that gets updated as new tokens arrive, somewhat analogous to how human memory works: more recent information is processed with higher fidelity, while earlier context is progressively summarized. This allows the model to support a one-million-token context window without the memory footprint exploding as it would with a pure transformer.
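To see why a fixed-size state matters at long context, compare how an attention key-value cache grows with the token count against a recurrent state that does not. This is a minimal sketch: the layer counts, head sizes, and state dimensions are hypothetical placeholders, not Nemotron 3 Super's actual configuration, and a hybrid model would still carry a cache for whatever attention layers it retains.

```python
# Memory growth of an attention KV cache vs. a fixed-size state-space state.
# All model dimensions below are hypothetical, chosen only for illustration.

def kv_cache_bytes(tokens: int, layers: int = 48, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Keys and values are stored per token, per layer: grows linearly with context."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


def ssm_state_bytes(tokens: int, layers: int = 48, channels: int = 8192,
                    state_dim: int = 128, bytes_per_value: int = 2) -> int:
    """A recurrent state is overwritten in place, so its size ignores `tokens`."""
    return layers * channels * state_dim * bytes_per_value


for n in (1_000, 100_000, 1_000_000):
    kv_gb = kv_cache_bytes(n) / 1e9
    ssm_gb = ssm_state_bytes(n) / 1e9
    print(f"{n:>9,} tokens: KV cache {kv_gb:8.2f} GB | SSM state {ssm_gb:.2f} GB")
```

With these placeholder dimensions the cache grows from a fraction of a gigabyte to well over a hundred gigabytes per sequence, while the recurrent state stays constant. The exact numbers depend entirely on the assumed dimensions, but the shape of the curve is the point.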

The model also introduces LatentMoE, a novel expert routing mechanism that compresses tokens into a latent space before dispatching them to expert networks, allowing four times as many experts to be activated at the same inference cost. Combined with multi-token prediction for built-in speculative decoding, Nemotron 3 Super is purpose-built for multi-agent workloads where context windows grow rapidly and cost-per-token is the decisive constraint.

Crucially for the hardware market, Nemotron 3 Super is natively trained in NVFP4, NVIDIA's four-bit floating-point format optimized for Blackwell GPUs. The model was trained in reduced precision from the first gradient update, not quantized after the fact. NVIDIA claims this delivers up to four times faster inference on B200 GPUs compared to FP8 on H100. This is a clear signal: the model is designed to drive adoption of Blackwell silicon and to make the Vera Rubin architecture, arriving in the second half of 2026, even more compelling.
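The hardware implication of a four-bit format is easiest to see as a weight-storage estimate. The sketch below uses nominal bit widths only and assumes every parameter is stored at the same precision. It ignores the per-block scaling factors that block-scaled formats carry, as well as any layers a real checkpoint keeps in higher precision, so treat the output as a lower bound rather than a measured footprint.

```python
# Approximate weight storage for a 120B-parameter model at different precisions.
# Nominal bit widths only; scaling-factor overhead and mixed-precision layers
# are deliberately ignored (an assumption of this sketch).

TOTAL_PARAMS = 120e9

BITS_PER_PARAM = {"BF16": 16, "FP8": 8, "NVFP4": 4}


def weight_gigabytes(params: float, bits_per_param: int) -> float:
    """Convert a parameter count and bit width into gigabytes (10^9 bytes)."""
    return params * bits_per_param / 8 / 1e9


for name, bits in BITS_PER_PARAM.items():
    print(f"{name:>5}: {weight_gigabytes(TOTAL_PARAMS, bits):6.0f} GB of weights")
```

Halving the bits per weight halves both the memory needed to hold the model and the bytes that must be streamed for each generated token, which is where much of the claimed speedup on bandwidth-bound decode would come from.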

The Memory Wall and the SRAM Revolution

TLDR;

NVIDIA has endorsed SRAM-based accelerators through its Groq purchase and an expected new chip announcement at GTC, a clear signal that specialized inference chips are here to stay.

Nemotron 3 Super's architecture is a direct response to one of the most fundamental constraints in AI inference: the memory wall. As models grow larger and context windows expand, the bottleneck shifts from raw compute to memory bandwidth. Token generation in autoregressive models requires loading the entire set of model weights from memory for every single token produced. On a conventional GPU, this means shuttling data between High Bandwidth Memory (HBM) and compute cores, and the bandwidth of that path sets a hard ceiling on inference speed.
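That ceiling can be estimated directly: if every generated token requires one full pass over the weights, single-stream decode speed cannot exceed memory bandwidth divided by the bytes read per token. The sketch below applies that bound to a hypothetical 70 billion parameter dense model stored at one byte per parameter. The 8 TB/s HBM figure is an assumed round number for a current high-end GPU, and the SRAM figure is the 21 PB/s cited in this article. Batching, caching, and on-chip capacity limits are ignored.

```python
# Bandwidth-bound ceiling on single-stream decode speed.
# Assumes each generated token streams the full set of weights once.

def max_decode_tokens_per_second(bandwidth_bytes_per_s: float,
                                 weight_bytes_per_token: float) -> float:
    """Upper bound on tokens/s when weight reads are the only cost."""
    if weight_bytes_per_token <= 0:
        raise ValueError("weight_bytes_per_token must be positive")
    return bandwidth_bytes_per_s / weight_bytes_per_token


WEIGHT_BYTES = 70e9 * 1.0   # hypothetical 70B dense model at 1 byte per parameter
HBM_BANDWIDTH = 8e12        # assumed ~8 TB/s for a high-end HBM GPU
SRAM_BANDWIDTH = 21e15      # 21 PB/s on-chip figure quoted in the article

hbm_ceiling = max_decode_tokens_per_second(HBM_BANDWIDTH, WEIGHT_BYTES)
sram_ceiling = max_decode_tokens_per_second(SRAM_BANDWIDTH, WEIGHT_BYTES)

print(f"HBM-bound ceiling:  {hbm_ceiling:,.1f} tokens/s per stream")
print(f"SRAM-bound ceiling: {sram_ceiling:,.1f} tokens/s per stream")
```

Real systems land well below the SRAM ceiling because compute, interconnect, and capacity become the limiting factors once memory bandwidth is no longer the bottleneck. GPUs recover throughput by batching many requests against a single weight read, at the cost of per-request latency.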

Cerebras has been the most aggressive in challenging this paradigm. Its Wafer-Scale Engine 3 (WSE-3) packs 44 GB of on-chip SRAM with 21 petabytes per second of internal memory bandwidth, thousands of times the bandwidth of the HBM on a high-end GPU. By keeping model weights directly on the processor, Cerebras largely removes the off-chip data-movement bottleneck. The results are striking: Cerebras has demonstrated over 2,100 tokens per second on 70 billion parameter models and over 3,000 tokens per second on smaller architectures. OpenAI signed a $10 billion deal with Cerebras in January 2026 to deploy its inference infrastructure through 2028, a validation of the SRAM-first approach for latency-sensitive workloads.

NVIDIA's response has been to bring the SRAM approach into its own ecosystem. On Christmas Eve 2025, NVIDIA completed a $20 billion deal to acquire the assets and core team of Groq, the AI inference startup whose Language Processing Units (LPUs) are built around on-chip SRAM for deterministic, ultra-low-latency token generation. During NVIDIA's fiscal Q4 2026 earnings call, CEO Jensen Huang compared the Groq integration to the Mellanox acquisition, which transformed NVIDIA's networking capabilities. The plan is to introduce Groq-derived LPU technology as a complementary inference accelerator within the Vera Rubin architecture, with a new rack-scale system known as LPX expected to be unveiled at GTC this month.

The architectural picture that is emerging is one of disaggregated inference: the compute-intensive prefill phase (processing input context) will be handled by Rubin GPUs or the GDDR7-based Rubin CPX chips, while the bandwidth-constrained decode phase (generating output tokens) will be offloaded to SRAM-based LPU accelerators. This separation of concerns mirrors how modern data centers already split training and inference, but takes it a step further by specializing within the inference pipeline itself.
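The reason the two phases suit different silicon shows up in their arithmetic intensity, the number of FLOPs performed per byte of weights read. A minimal roofline-style sketch follows: the peak-compute and bandwidth figures describe a hypothetical accelerator, and the model is reduced to "2 FLOPs per parameter per token," so the numbers are illustrative only.

```python
# Roofline-style check of which resource limits prefill vs. decode.
# Hardware figures are hypothetical; the workload model is deliberately crude.

def arithmetic_intensity(tokens_per_weight_load: int, bytes_per_param: float) -> float:
    """FLOPs per weight byte: each loaded parameter does ~2 FLOPs per token processed."""
    return 2.0 * tokens_per_weight_load / bytes_per_param


def limiting_resource(intensity: float, peak_flops: float, bandwidth: float) -> str:
    """Compare workload intensity with the hardware's FLOPs-per-byte ridge point."""
    ridge_point = peak_flops / bandwidth
    return "compute" if intensity >= ridge_point else "bandwidth"


PEAK_FLOPS = 1e15   # hypothetical 1 PFLOP/s accelerator
BANDWIDTH = 8e12    # hypothetical 8 TB/s memory system (ridge point: 125 FLOPs/byte)

prefill = arithmetic_intensity(tokens_per_weight_load=4096, bytes_per_param=1.0)
decode = arithmetic_intensity(tokens_per_weight_load=1, bytes_per_param=1.0)

print(f"Prefill: {prefill:,.0f} FLOPs/byte -> {limiting_resource(prefill, PEAK_FLOPS, BANDWIDTH)}-bound")
print(f"Decode:  {decode:,.0f} FLOPs/byte -> {limiting_resource(decode, PEAK_FLOPS, BANDWIDTH)}-bound")
```

Prefill amortizes each weight read across thousands of prompt tokens and so rewards raw compute, while single-stream decode reuses each weight once and rewards bandwidth. That asymmetry is the case for pairing compute-dense chips with SRAM-based decode accelerators.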

What this means for hardware buyers: The next generation of inference infrastructure is not just about GPUs. Organizations planning data center buildouts should expect a more heterogeneous silicon stack. The demand for Blackwell and Vera Rubin GPUs will remain intense, but new demand for SRAM-based accelerators and specialized prefill chips will emerge in parallel. For brokers in the secondary market, this diversification creates both opportunity and complexity.

The Strait of Hormuz Crisis: A Supply Chain Earthquake

TLDR;

Supply chain disruptions are expected now that the Strait of Hormuz has officially been closed to all traffic. The MENA region is expected to be hit the hardest.

On February 28, 2026, the United States and Israel launched coordinated airstrikes on Iran under Operation Epic Fury. Iran retaliated by closing the Strait of Hormuz, one of the world's most critical maritime chokepoints. On March 2, the Islamic Revolutionary Guard Corps formally confirmed the closure and threatened any vessel attempting transit. By March 4, tanker traffic through the strait had dropped to effectively zero. Iran's newly appointed Supreme Leader, Mojtaba Khamenei, stated publicly on March 12 that the closure should continue as a tool of strategic leverage.

The immediate impact has been on energy markets: approximately 20% of global oil supply and a significant share of liquefied natural gas exports from Qatar typically transit the strait. Oil prices have surged more than 10% since the conflict began, and fertilizer prices have spiked as roughly one-third of global fertilizer trade passes through the same waterway.

For the hardware and data center industry, the effects are less direct but significant. The Strait of Hormuz is a key transit route for container shipping between Asia and Europe. Server components, GPU modules, networking equipment, and assembled systems sourced from Asian manufacturers and destined for Middle Eastern or European deployments frequently transit this corridor. With major shipping companies including Maersk and Hapag-Lloyd suspending Mideast routes, and insurers pulling coverage for the region, logistics costs and lead times for hardware deliveries to the Gulf and beyond have increased sharply. The alternative route around the southern tip of Africa adds weeks to delivery timelines.

For data center operators in the UAE, Saudi Arabia, and the broader MENA region, this compounds an already challenging procurement environment. Power disruptions, heightened security premiums, and the physical risk of drone strikes in the Gulf create a new layer of operational uncertainty for any organization running or building compute infrastructure in the region.

U.S. Export Controls: The Tightening Noose on Global GPU Access

TLDR;

The U.S. is preparing a new, more restrictive set of rules for buying advanced chips in any meaningful quantity.

Simultaneously, the Trump administration is drafting sweeping new export regulations that would require U.S. government approval for nearly all global shipments of advanced AI accelerators. The proposed framework, reported by Bloomberg on March 5, 2026, creates a three-tier system based on order volume. Shipments of fewer than 1,000 GPUs (of NVIDIA GB300 or equivalent) would face a simplified review process. Orders between 1,000 and 200,000 units would require pre-approval from the Bureau of Industry and Security (BIS) and an export license. And for the largest deployments exceeding 200,000 GPUs, purchasing entities would need to make direct investments in American AI data center infrastructure and submit to potential on-site inspections.
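For procurement planning, the reported tiers can be encoded as a simple lookup. The sketch below reflects only the thresholds described above, from a draft framework that may change. The tier names are invented for illustration, the handling of orders of exactly 1,000 or 200,000 units is an assumption, and the draft's method for converting other accelerators into "GB300 or equivalent" units is not modeled.

```python
# Illustrative classifier for the reported three-tier draft export framework.
# Tier names and exact boundary handling are assumptions, not regulatory text.
from enum import Enum


class ReviewTier(Enum):
    SIMPLIFIED_REVIEW = "simplified review"
    PRE_APPROVAL_AND_LICENSE = "BIS pre-approval and export license"
    INVESTMENT_AND_INSPECTION = "U.S. investment and possible on-site inspection"


def classify_order(gpu_count: int) -> ReviewTier:
    """Map an order size (in GB300-equivalent units) to the reported review tier."""
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    if gpu_count < 1_000:
        return ReviewTier.SIMPLIFIED_REVIEW
    if gpu_count <= 200_000:
        return ReviewTier.PRE_APPROVAL_AND_LICENSE
    return ReviewTier.INVESTMENT_AND_INSPECTION


for order in (512, 8_000, 250_000):
    print(f"{order:>7,} GPUs -> {classify_order(order).value}")
```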

This framework goes well beyond the existing China-focused restrictions. It would apply globally, including to close allies in Europe and Asia. For Middle Eastern and North African markets, which are not classified as preferred U.S. partners, the practical effect is a significant additional barrier to procuring cutting-edge AI hardware at scale. Countries and companies in the MENA region that want to build large GPU clusters will face longer approval timelines, greater regulatory scrutiny, and in many cases, requirements to invest in U.S.-based infrastructure as a prerequisite for access.

The January 15, 2026 BIS final rule already allowed H200 and MI325X exports to China under case-by-case review with a 25% revenue-sharing fee to the U.S. government. The new draft rules would extend the principle of conditioned access to the entire world, effectively giving Washington veto power over who builds large-scale AI infrastructure and where.

The China Factor: From Importer to Potential Exporter

TLDR;

China announced a new round of subsidies to ramp up advanced chip production. It is likely to become a chip exporter before the end of the decade.

The intersection of export controls and maritime disruption is creating conditions for a structural shift in how regions outside the U.S. source their AI hardware. China's domestic chip ecosystem has been accelerating rapidly. Cambricon Technologies, often called 'China's NVIDIA,' plans to more than triple its AI chip output in 2026, targeting 500,000 accelerators including up to 300,000 units of its Siyuan 590 and next-generation Siyuan 690 processors. Huawei is shipping hundreds of thousands of Ascend AI accelerators annually and plans to bring dedicated AI chip manufacturing plants online. The Chinese government announced a major new AI and semiconductor subsidy program in early March 2026 aimed at achieving full self-sufficiency, adding to over $150 billion already committed through multiple phases of the National IC Fund.

Currently, Chinese chip production is largely consumed domestically. SMIC's advanced process capacity is running at over 95% utilization, with fierce competition for wafer allocation among Huawei, Cambricon, and other domestic designers. But the trajectory is clear: as capacity expands and production economics improve through government subsidies, the possibility of Chinese AI chips entering export markets, particularly in the MENA region, becomes increasingly realistic.

For data center operators in the Middle East and North Africa, this creates a complex but potentially significant alternative supply channel. Chinese accelerators will not match NVIDIA's latest generation on raw performance, and the software ecosystem remains less mature than CUDA. But for organizations facing long regulatory approval processes for American hardware, shipping disruptions through the Strait of Hormuz, and escalating procurement costs, Chinese alternatives may increasingly factor into deployment decisions, particularly for inference workloads where raw performance matters less than cost-per-token and availability.

The Convergence: What This Means for Hardware Procurement

Four threads are converging to reshape the global GPU and AI hardware market: the shift toward heterogeneous inference architectures, the Strait of Hormuz closure, tightening U.S. export controls, and the rise of Chinese chip alternatives. Their combined effects will persist well beyond any single crisis.

Architectural diversification demands broader sourcing. The era of "just buy NVIDIA GPUs" is giving way to multi-vendor, multi-architecture deployments. Organizations need access not just to H100s and B200s, but to a growing range of specialized inference accelerators, CPX chips, and potentially SRAM-based systems. Brokers who can source across this broader landscape will be better positioned.

Logistics routes are no longer reliable. The closure of the Strait of Hormuz has demonstrated that maritime shipping routes through the Gulf cannot be taken for granted. Hardware buyers in the Middle East and Europe need diversified logistics strategies, including air freight contingency plans and alternative arrangements through Oman's ports or Red Sea routes, though these too carry elevated risk premiums.

Regulatory friction is becoming a permanent feature. The proposed U.S. export framework makes GPU procurement a geopolitical exercise. Organizations outside the United States planning large-scale AI deployments should begin engaging with regulatory compliance processes early and consider structuring purchases to stay below the thresholds that trigger the most intensive review requirements.

The secondary and gray markets will grow. As primary channel procurement becomes more constrained by both regulation and logistics, demand for secondhand, surplus, and authorized-resale hardware will intensify. For hardware brokers, this represents both the core opportunity and a responsibility to maintain strict compliance with export control regulations across all transactions.

Looking Ahead

GTC 2026, running March 16-19 in San Jose, is expected to bring further clarity on NVIDIA's inference strategy, including details on the LPX rack architecture and the full Vera Rubin platform roadmap. We will be watching closely for any developments that affect hardware availability and pricing in the markets we serve.

The situation in the Strait of Hormuz remains fluid and is evolving daily. Iran's new leadership has shown no signs of reopening the waterway, and the conflict's resolution timeline is uncertain. For the hardware supply chain, the prudent assumption is that the disruption will persist for the near term.

At CLT, we continue to monitor these intersecting developments to help our clients navigate sourcing, logistics, and compliance in an increasingly complex environment. Whether you are building a new data center cluster, expanding inference capacity, or looking to source specific GPU configurations, we are here to help find the right hardware through the right channels.

Contact

Reach out for AI solutions today: sales@computelabtech.com